How to Diagnose and Recover From the Q1 2026 Google Quality Update

April 21, 2026 by Jairene Cruz-Eusebio | 12 min read

This post is brought to you by Semrush.

Did your organic traffic collapse in Q1 2026, and you still have no idea why, even after checking Search Console?

It might be due to the January 2026 Google update.

Google actually has not confirmed or named the update, but affected sites continue to decline.

Let’s talk about what it actually is and what you can do about it if you’re affected.

Key Takeaways

- No Manual Penalty Doesn’t Mean You’re Safe: Google flagged sites algorithmically. Traffic dropped up to 90% with no warning in Search Console.

- The Problem Is Your Whole Site, Not Just One Page: Google evaluated entire domains, not individual pages. Fixing a few articles won’t be enough if the bigger quality issue is site-wide.

- Google Rankings and AI Mentions Are Dropping Together: Sites that lost search traffic also stopped getting cited by ChatGPT and other AI tools at the same time. You need to track both.

- Your Lost Rankings Went to Big-Name Sites, Not Better Content: Reddit threads, TrustPilot pages, and major news sites took the spots you lost. This is parasite SEO, and it’s not going away.

- Publishing More Without Real Expertise Makes Things Worse: Covering topics your site has no track record in is now actively hurting you. Stick to what you genuinely know.

- Four Things Help AI Tools Cite Your Content: Named expert sources, direct answers, tight topic focus, and links from trusted sites. These apply to both Google and AI search.

- Audit First, Fix Later: Check content quality, ranking shifts, competitor gains, and AI visibility all at once. Each problem has a different solution, so you need to know which one you actually have.

TL;DR

| Structural change | What it means in practice |
| --- | --- |
| Content strategy = topical authority strategy | Stop publishing outside your verified expertise areas. Volume without authority is now a liability |
| AI citation share is a first-class KPI | Track organic rankings and AI citation frequency together, not in separate reporting silos |
| Competitive intelligence must include authority-domain content | Map and monitor parasitic content on TrustPilot, Reddit, Medium, LinkedIn, and news publishers as permanent SERP fixtures |

What Happened and Why the Damage Pattern Is Different This Time

In Q1 2026, sites that had ranked stably for hundreds of keywords watched their traffic collapse overnight.

Not a dip, but a drop of 70 to 90 percent.

There was no manual action, no penalty notice, and no explanation from Google.

Semrush Sensor data from January–February 2026 registered major ranking drops across 66% of monitored signals.

It’s the most extreme SERP volatility recorded in the prior two years, according to Semrush’s volatility index methodology.

MozCast confirmed the same window.

Barry Schwartz, founder of Search Engine Roundtable and the primary journalist covering Google algorithm changes since 2003, catalogued practitioner reports throughout the period and documented a consistent pattern: the damage runs along domain lines, not page lines.

Google appears to have evaluated entire sites based on overall content trustworthiness rather than individual pages.

Sites hit hardest fall into three categories:

- AI-generated content farms publishing across finance, health, travel, and software, with no genuine subject-matter authority;

- self-promotional listicles built for affiliate clicks, with no original research; and

- programmatic template sites generating thousands of pages with no editorial value-add.

What held steady were niche publishers with tight topical focus, verified expertise, and a consistent publishing history in a defined subject area.

The common thread is not AI—it is AI combined with an absence of genuine expertise.

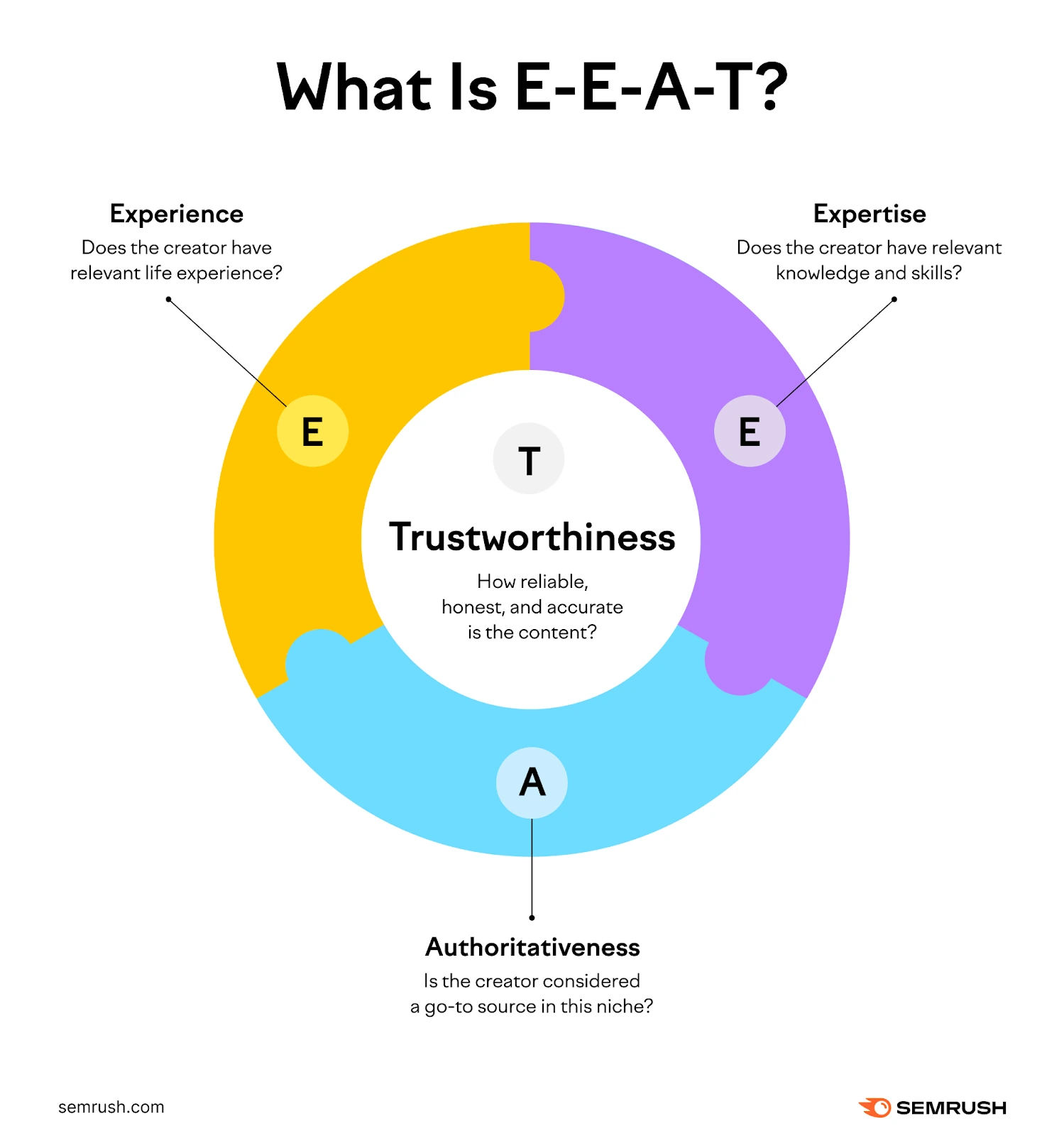

Google’s own E-E-A-T framework (Experience, Expertise, Authoritativeness, and Trustworthiness) appears to have been applied at the domain level in this update.

(Image source: Semrush Blog)

SEO practitioners have proposed three explanations, none mutually exclusive:

- a broad core algorithm reweighting,

- an adjusted review system targeting thin authority claims, and

- a scaled spam enforcement action against AI content published outside a site’s demonstrated topical authority.

What makes this update particularly frustrating is who filled the ranking gaps.

Not careful, expert-led publishers, but TrustPilot pages, Reddit threads, and news publisher sub-sections.

These domains rank not because their content is better, but because Google’s domain-level quality evaluation rewards accumulated trust signals regardless of page-level quality.

A thin listicle on a major news domain holds rank. An identical piece on a niche affiliate site collapses.

The differentiating variable is not the content.

It’s the host domain’s authority.

This is what SEO practitioners call parasite SEO.

And it means fixing individual pages will not solve a domain-wide authority deficit.

Are You Losing Visibility in Both Google and AI Search at the Same Time?

Yes, and the two losses are linked.

Lily Ray, Vice President of SEO Strategy and Research at Amsive, documented that sites losing organic visibility in this update simultaneously lost citation frequency in ChatGPT responses.

The same sites, the same timeframe, the same directional collapse across both channels.

That matters because AI citation share is now a first-class KPI alongside organic ranking share.

Content that fails to earn citations in AI-generated responses (from ChatGPT, Perplexity, Gemini, or Google AI Overviews) is losing brand presence in the discovery channel that increasingly sits between a user’s question and any destination website.

A rank-tracking dashboard shows the organic loss.

It does not show the AI citation erosion happening in parallel.

Without monitoring both, the true scale of the damage remains invisible.

Where Did the Displaced Rankings Go?

The rankings that left penalized sites did not disappear—they were redistributed.

Understanding exactly where they landed shapes every recovery decision you make.

Rankings Moved Into 3 Destinations

1. Authority-Domain Content

TrustPilot, Reddit, aggregator pages, and news publisher listicles now occupy positions niche sites previously held.

2. AI Answer Panels

Google AI Overviews, ChatGPT, and Perplexity are absorbing queries that previously resolved to organic clicks.

3. Direct Competitors

Competitors with tighter topical focus and cleaner content histories that were not caught in the same quality evaluation.

Mid-tier and smaller publishers are now competing against parasitic content on high-authority domains for keywords they previously owned outright.

Want to recover your keyword ranking and traffic?

The first step is to identify exactly which keywords shifted, and to whom.

4 Content Signals That Enhance AI Citation Likelihood

Understanding what is being penalized is only half the picture.

There are four content signals that consistently increase AI citation probability across ChatGPT, Perplexity, Gemini, and Google AI Overviews.

These are:

1. Claims From Trustworthy And Named Sources

Because AI systems consider who is an expert when deciding if information is trustworthy, proof of expertise (like qualifications) should be linked directly to the facts you state, not just left in the author’s biography.

2. Clear Answers to Questions

The system prefers direct, factual statements that answer a question.

It usually ignores information that is unclear or uses words like “maybe” or “it seems.”

3. Staying Focused on One Topic

A website that writes about a specific, narrow topic shows real knowledge.

A site that tries to cover too many different things looks less expert.

4. High-Quality Links from Good Websites

Getting links from well-known and respected websites acts like a confirmation that your content is trustworthy.

This helps both regular search results and AI systems.

These ideas for SEO are not new.

Google has been moving toward them since the Helpful Content system started in 2022.

The important change is that these principles now affect two separate areas of visibility at the same time.

If you don’t follow them well, the negative impact is much stronger and hurts both areas.

Diagnosing the Impact of the Q1 2026 Google Update on Your Site

Google Search Console will show you the traffic decline, but it will not explain why it happened.

With no manual penalty and no official announcement, nothing confirms whether your site has a quality issue.

You have to work out the problem by looking closely at how your site’s performance was hurt.

That takes a four-part investigation.

Part 1: Checking Content Quality

Do a complete check of all pages on your website.

This will help you find:

- Pages with very little information (thin content).

- Pages that are exactly the same or very similar (duplicate content or near-duplicate templates).

- Pages with shallow information that are presented as high-quality articles.

- Website addresses (URLs) that are structured in a way that weakens your site’s authority on a topic.

These issues on individual pages add up to a poor quality score for your entire website.

Teams often don’t realize how widespread these problems are until a full website check reveals the true extent.
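
If you want a rough first pass before running a full crawl, the thin-page and near-duplicate checks above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `audit_pages` helper, the 300-word threshold, and the 0.8 similarity cutoff are all hypothetical, and the function expects you to supply already-extracted page text as a `{url: body_text}` mapping. It is not how Site Audit or Google scores pages.

```python
import re
from itertools import combinations

# Hypothetical thresholds; tune them for your own site.
MIN_WORDS = 300            # below this, treat a page as "thin"
NEAR_DUP_THRESHOLD = 0.8   # Jaccard similarity at or above this = near-duplicate


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def shingles(words: list[str], size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams (shingles)."""
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def audit_pages(pages: dict[str, str]) -> dict[str, list]:
    """Flag thin pages and near-duplicate pairs in a {url: body_text} mapping."""
    tokens = {url: tokenize(body) for url, body in pages.items()}
    thin = sorted(url for url, words in tokens.items() if len(words) < MIN_WORDS)
    sets = {url: shingles(words) for url, words in tokens.items()}
    near_dupes = [
        (u1, u2)
        for u1, u2 in combinations(sorted(sets), 2)
        if jaccard(sets[u1], sets[u2]) >= NEAR_DUP_THRESHOLD
    ]
    return {"thin": thin, "near_duplicates": near_dupes}
```

The pairwise comparison grows quadratically with the number of pages, so this approach suits a sample of a few hundred URLs rather than a full programmatic site.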

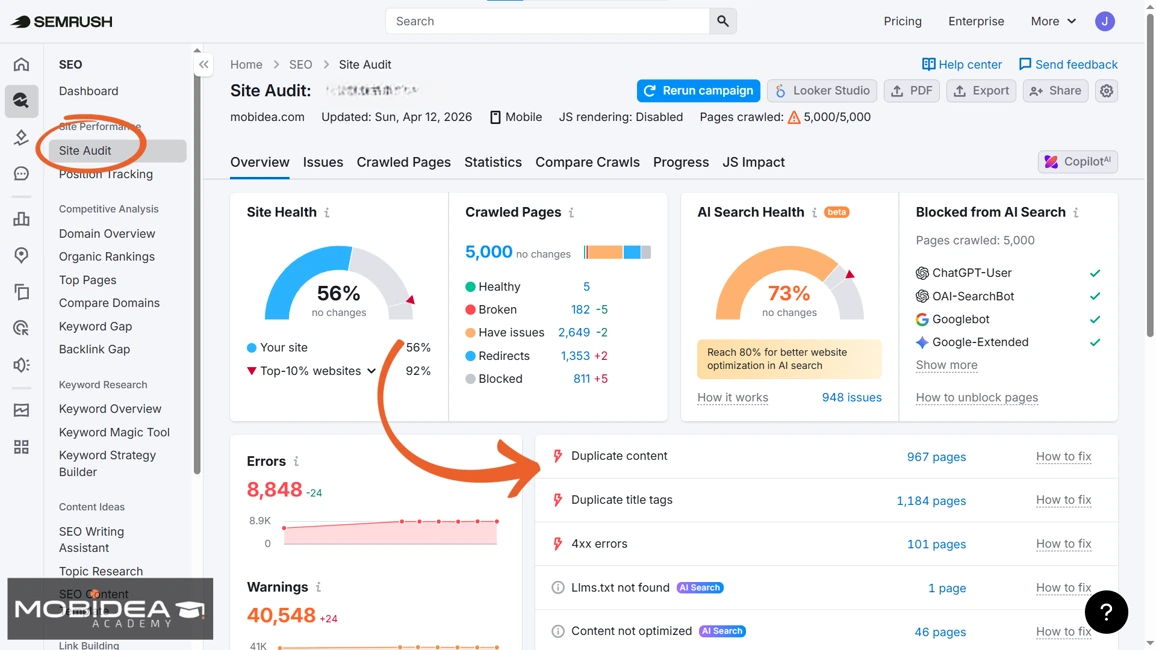

We recommend using Semrush One’s Site Audit for this task.

Here’s how to use it:

- Open Semrush One and navigate to the Site Audit tool.

- Click on the “Create SEO Project” button.

- Enter your domain to initiate a full crawl.

Once the crawl is complete, filter flagged issues by severity and prioritize thin pages, duplicate structures, and low-depth editorial content.

These are the most likely contributors to a suppressed domain quality signal.

Re-run the audit after each round of remediation to track whether your site health score is improving.

Part 2: Verifying Ranking Changes

You should find the groups of keywords that started losing rank first.

Knowing whether the decline was slow and gradual (a chronic problem) or fast and steep (an acute problem) tells you how to fix it.

The solutions for these two are different.

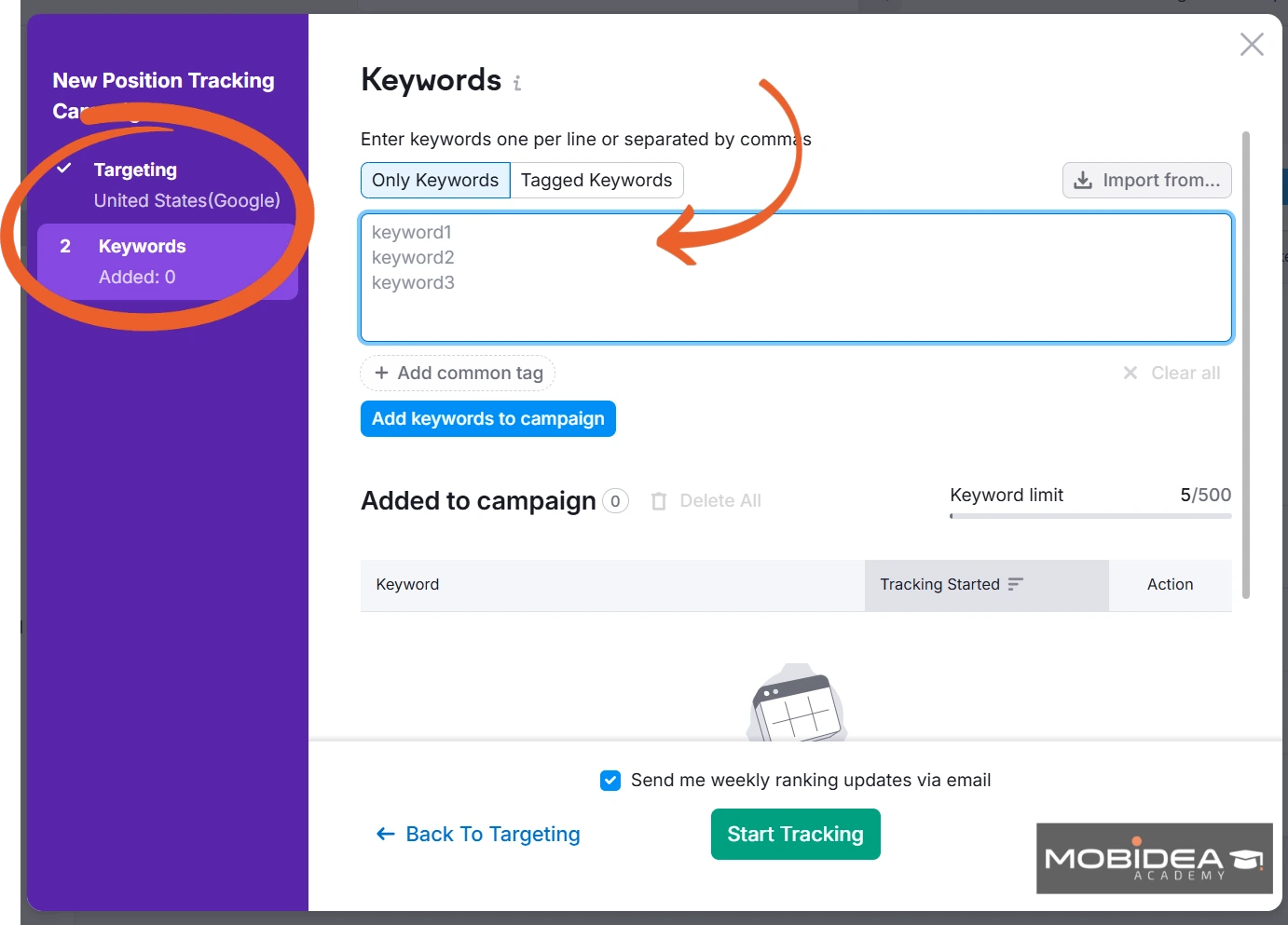

For this task, we recommend using Semrush One’s Position Tracking, which is still located under SEO in the dashboard.

Follow these steps:

1. Set up a Position Tracking project for your domain.

2. Add the keyword clusters most relevant to your business.

3. Use the timeline view to isolate the drop window against the January–February 2026 period.

4. Distinguish between a sharp concentrated drop (update-specific damage) and a gradual multi-month slide (pre-existing decay).
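
The distinction in step 4 can also be approximated on exported data. The sketch below is a minimal illustration, assuming you have weekly click totals in chronological order and know which week the suspected update window starts. The `classify_decline` function and its 40% and 20% thresholds are hypothetical choices, not values from Semrush or Google.

```python
from statistics import mean


def classify_decline(
    weekly_clicks: list[float],
    update_index: int,
    sharp_drop: float = 0.4,   # hypothetical: 40%+ loss at the window = acute
    slide: float = 0.2,        # hypothetical: 20%+ loss before the window = chronic
) -> str:
    """Label a traffic series as 'acute', 'chronic', or 'stable'.

    weekly_clicks: chronological weekly totals.
    update_index: index of the first week inside the suspected update window.
    """
    before = weekly_clicks[:update_index]
    after = weekly_clicks[update_index:]
    if len(before) < 4 or not after:
        raise ValueError("need at least 4 weeks before the window and 1 week after")

    # Pre-window trend: compare the first and second halves of the "before" period.
    half = len(before) // 2
    early, late = mean(before[:half]), mean(before[half:])
    pre_trend_loss = (early - late) / early if early else 0.0

    # Drop at the window: compare the weeks just before it with the weeks after it.
    drop_at_window = (late - mean(after)) / late if late else 0.0

    if pre_trend_loss >= slide:
        return "chronic"   # traffic was already sliding before the window
    if drop_at_window >= sharp_drop:
        return "acute"     # stable beforehand, then a concentrated drop
    return "stable"
```

A series that was already sliding is labeled chronic even if it also fell during the window, since the pre-existing decay needs attention either way.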

Part 3: Investigating What Your Competitors Did Better

Just knowing that your website rankings dropped isn’t enough.

You need to know who took your rankings.

If a direct competitor with similar content is now ranking higher, it means your content isn’t good enough anymore.

You can fix this by improving your content.

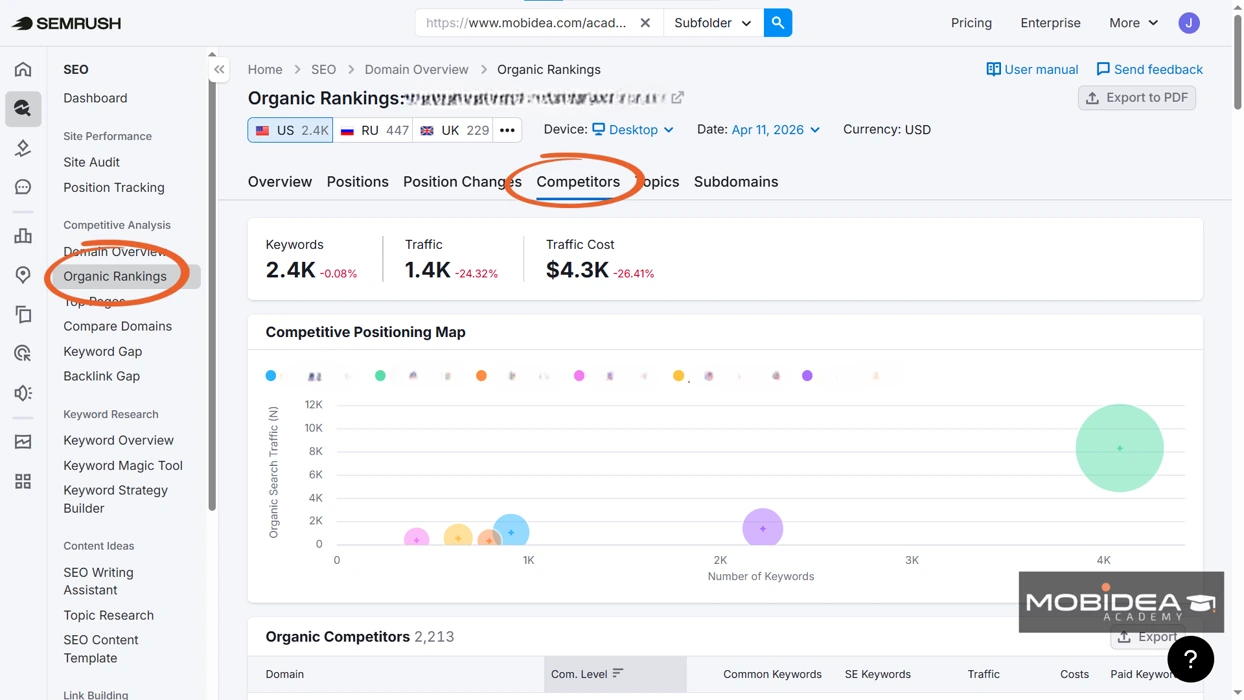

To check, use Semrush One’s Organic Rankings tool.

- Navigate to the Organic Research tool and enter the domains that took over your former keyword positions.

- Include TrustPilot, Reddit, or major news publishers if they have appeared in your lost rankings.

- Review the pages generating the highest visit volume to identify which content types are being rewarded in the post-update landscape.

- If the same authority domain is gaining across multiple keyword clusters you lost, check the website’s pages and include it in the list of competitors to monitor.
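
If you keep before-and-after snapshots of the results for your keywords, the same question (who took the spot?) can be tallied in code. This is a rough sketch with hypothetical names throughout: `who_took_my_rankings` expects two `{keyword: [ranked URLs]}` mappings that you assemble yourself, and `AUTHORITY_HOSTS` is an illustrative list, not an official classification.

```python
from collections import Counter
from typing import Optional
from urllib.parse import urlparse

# Hypothetical list of high-authority host platforms; extend it for your niche.
AUTHORITY_HOSTS = {"reddit.com", "trustpilot.com", "medium.com", "linkedin.com"}


def domain_of(url: str) -> str:
    """Return the hostname without a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def position_of(domain: str, ranked_urls: list[str]) -> Optional[int]:
    """Return the 0-based position of the first URL on `domain`, or None."""
    for index, url in enumerate(ranked_urls):
        if domain_of(url) == domain:
            return index
    return None


def who_took_my_rankings(
    my_domain: str,
    before: dict[str, list[str]],
    after: dict[str, list[str]],
) -> Counter:
    """Tally the domains now holding positions `my_domain` held before the drop."""
    winners: Counter = Counter()
    for keyword, old_results in before.items():
        old_pos = position_of(my_domain, old_results)
        new_results = after.get(keyword, [])
        new_pos = position_of(my_domain, new_results)
        if old_pos is None or old_pos >= len(new_results):
            continue  # we did not rank before, or there is no comparable data
        if new_pos is not None and new_pos <= old_pos:
            continue  # position held or improved
        winners[domain_of(new_results[old_pos])] += 1
    return winners


def split_by_type(winners: Counter) -> tuple[dict[str, int], dict[str, int]]:
    """Separate authority platforms from direct competitors."""
    authority = {d: n for d, n in winners.items() if d in AUTHORITY_HOSTS}
    direct = {d: n for d, n in winners.items() if d not in AUTHORITY_HOSTS}
    return authority, direct
```

A tally dominated by the authority bucket points toward the parasite SEO scenario, while a tally dominated by direct competitors points toward a content gap. Note that this simple hostname match only strips `www.`, so other subdomains count as separate domains.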

If you have been replaced by a website that uses parasite SEO (Reddit, Medium, LinkedIn, etc.), then simply making your content better won’t be enough.

This situation shows that your website lacks the necessary overall authority.

You need to take steps to improve your overall website ranking.

You can also win back some traffic by participating on that platform directly.

For example, if it’s Reddit, it may be time to interact with your audience there and, from time to time (not always), recommend pages from your website that are relevant to the discussion.

If it’s Medium or LinkedIn, you can use these platforms to share original content that links back to your website.

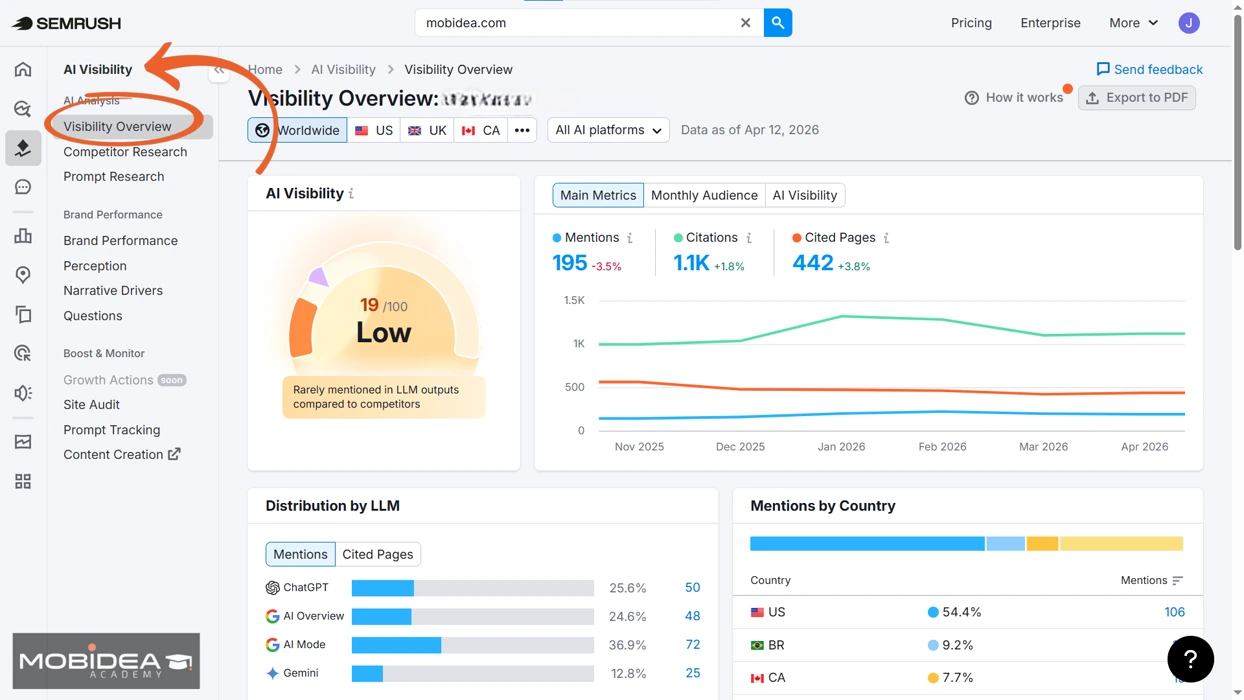

Part 4: Monitoring Your AI Visibility

Don’t think the problem only revolves around how your website appears in Google search.

It is also a wise move to check if your brand is still being mentioned in answers created by AI for the topics you used to rank for.

If AI mentions are dropping at the same time as your Google rankings, it means the quality problem is happening in both places.

If so, fixing the problem requires improving the overall trust and authority of your website, not just changing your content.
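
The reasoning above boils down to comparing two trends side by side. As a minimal sketch, assuming you have one organic figure (for example, monthly clicks) and one AI figure (for example, a count of AI answers mentioning your brand) for the periods before and after the drop, the check could look like this. The `diagnose_channels` function and the 20% decline threshold are hypothetical.

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from `before` to `after` (-0.5 means a 50% decline)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def diagnose_channels(
    organic_before: float,
    organic_after: float,
    ai_before: float,
    ai_after: float,
    threshold: float = -0.2,  # hypothetical: a 20%+ loss counts as a decline
) -> dict:
    """Report whether organic search, AI mentions, or both declined."""
    organic = pct_change(organic_before, organic_after)
    ai = pct_change(ai_before, ai_after)
    organic_down = organic <= threshold
    ai_down = ai <= threshold

    if organic_down and ai_down:
        verdict = "both"          # suggests a site-wide trust and authority problem
    elif organic_down:
        verdict = "organic_only"
    elif ai_down:
        verdict = "ai_only"
    else:
        verdict = "none"
    return {"organic_change": organic, "ai_change": ai, "verdict": verdict}
```

For instance, `diagnose_channels(1000, 300, 50, 10)` returns a `"both"` verdict, since organic clicks fell 70% and AI mentions fell 80%.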

How do you know for sure?

Use Semrush One’s AI Visibility Toolkit.

- Open the AI Visibility Toolkit and review your Visibility Overview score. This is the frequency with which your brand surfaces when users query AI platforms on topics related to your industry.

- Use Competitor Research to identify which brands AI platforms are recommending instead of yours.

- Review Brand Performance to track mention frequency over time across each AI platform. If performance here drops at the same time as your organic rankings, it means there’s a quality problem across the board.

- Use the Questions tool to find what users are actively asking that AI systems have not yet answered satisfactorily. These are your content creation opportunities.

What To Do With Your Content After a Quality Update

Once the audit is complete, every page on the affected domain falls into one of three categories.

| Category | Where to apply | Action |
| --- | --- | --- |
| Consolidate or redirect | Pages that are: | Leaving this content online while you try to fix other pages may hurt your recovery efforts. |
| Rehabilitate | Pages about very important topics that have real, helpful information but are not written well. | |
| Protect and amplify | Content that performs well in topics where the website is a recognized expert | This prevents the weaker parts from lowering the overall quality score. |

Three Structural Changes Every SEO Team Must Internalize

The Q1 2026 update shows a clear direction; it is not just a one-time problem.

Your content plan and your strategy for showing deep expertise on a subject are now completely connected.

Writing a lot of content without proving you are an expert is harmful.

The important question is no longer how much a site publishes, but whether Google has proof to trust the site’s knowledge on those published topics.

AI citation share is now as important as organic ranking share.

If your content is not cited in answers generated by AI, your brand is losing visibility in the way people will increasingly find information.

Therefore, you must track organic ranking share and AI citation share together, not in separate reports.

After the Google update, you must now pay attention to content from very well-known, authoritative websites.

Content from “parasitic” sites (like TrustPilot, Reddit, and big news sites) that ranks for your terms is a permanent part of Google’s search results—it won’t just disappear.

You need to identify, track, and include this content in your plans for what you write and how you try to recover your own rankings.

Frequently Asked Questions

What caused Google traffic to drop in Q1 2026?

Websites that made a lot of content using AI or automated templates, especially in topics they weren’t experts in, were affected by a Google quality check.

This unofficial evaluation caused some sites to lose up to 90% of their traffic from search results, even though Google didn’t give them a manual penalty.

Did Google confirm the Q1 2026 update?

No. Google issued no statement, no update name, and no remediation guidance.

Why is my Google traffic down but I have no manual action in Search Console?

Algorithmic quality evaluations do not trigger Search Console notices.

A sharp traffic drop with no alert points to a domain-level quality signal, not a manual penalty.

What types of sites were most affected?

Sites publishing AI-generated content across unrelated verticals, affiliate-driven listicles, and programmatic templates at scale were hit hardest, particularly those operating outside their verifiable areas of topical expertise.

What is parasite SEO?

Parasite SEO is content hosted on high-authority domains like LinkedIn and Reddit that ranks by borrowing the host domain’s accumulated trust signals, regardless of the individual page’s quality.

Why is parasite SEO surging after the Q1 2026 update?

When smaller websites were punished and lost their positions in search results, big, well-known websites took their places.

These big websites often filled the empty spots with content that would have been judged as poor quality if it had come from the smaller publishers.

How do I know if my brand is losing visibility in AI-generated answers?

Use a dedicated AI visibility tool like Semrush One’s AI Visibility Toolkit. Standard rank trackers will not show AI citation loss because the two measurement systems are separate.

What is the first step to recovering from an unconfirmed Google update?

Before you change any content, run all four parts of the website check together:

- checking content quality,

- verifying ranking changes,

- investigating competitors,

- monitoring AI visibility.

This check will tell you if your problem is about content quality, not enough authority, or a problem with showing up across different channels, and each problem needs a different solution.

Does proactive monitoring help prevent future update damage?

Yes. Semrush Sensor data from the Q1 2026 period shows that sites with daily tracking already in place responded faster and with more diagnostic clarity than those building monitoring infrastructure after the fact.

Jairene is a writer who has worked in affiliate marketing since 2013. She is a digital marketer, an industrial engineer, and a published author, all in one! Jairene knows a lot about the performance marketing industry and is eager to share it all here, so stick around!
